Music is believed to have existed in some form since prehistoric times. The earliest human civilizations likely used fundamental musical techniques (creating rhythms and vocal expressions, for example) for practical and, increasingly throughout history, aesthetic purposes. Music is thought to have served as a tool of survival in ancient tribal communities, as well as a means of recreation and social connection among human cultures.

Music unites people. It informs and affirms cultural identity, and it stimulates and inspires the human mind. From the medieval period, to the classical era, to the modern and postmodern ages, and beyond–music has evolved to incorporate different sounds, scales, and instrumentation into new forms and new applications, which are soaring to great new heights in music technology.

The Modern Instrument


Generally speaking, musical instruments as they exist today can be broken down into several major categories, including percussion instruments, wind instruments, stringed instruments, and, most recently, electronic instruments. And while acoustic instruments have given us centuries of musical traditions and techniques upon which to draw, there seems to be no limit to the possibilities that modern electronic instrumentation presents. Now, people can access nearly any type of audio imaginable directly from their browser using readily available software, such as FLVTO, which converts streaming media from the web to MP3 files.

Although music is an art form that can be highly abstract in nature, it is made up of several quantifiable components. The basics of music can be broken down into rhythm (the beats, timing and accents of music), melody (patterns and variations in pitch that are expressed over time), and harmony (musical notes played simultaneously with one another). Thus, rhythm and melody are temporal musical qualities, while harmony could be regarded as a spatial quality.

Going a little deeper, the fundamentals of sound are a bit more complex and multifaceted. Simply put, sound is the manner in which certain types of fluctuations in atmospheric pressure are perceived by the human ear, and in turn, the brain and nervous system. These fluctuations (called sound waves) are made up of various simple waves (called sine waves) which, compounded with one another, are perceived by the ear as sound.

Sound waves can be created using various methods. An oscillator, for example, generates sound by repeating a simple waveform (such as a sine wave) so rapidly that the repetitions are heard as one continuous note. The pitch of this note is determined by its frequency, which is the number of times the wave repeats per second, measured in hertz (Hz). The higher the frequency, the higher the pitch of the note.
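To make the link between frequency and pitch concrete, here is a minimal Python sketch of a sine oscillator. The sample rate of 44,100 samples per second and the function names `sine_wave` and `count_cycles` are assumptions chosen for illustration; they do not come from any particular instrument or library.

```python
import math

SAMPLE_RATE = 44100  # samples per second (an assumed, commonly used rate)


def sine_wave(frequency_hz, duration_s, sample_rate=SAMPLE_RATE):
    """Return a list of samples for a sine wave at the given frequency."""
    n_samples = int(duration_s * sample_rate)
    return [
        math.sin(2 * math.pi * frequency_hz * n / sample_rate)
        for n in range(n_samples)
    ]


def count_cycles(samples):
    """Estimate how often the wave repeats by counting upward zero crossings."""
    return sum(1 for a, b in zip(samples, samples[1:]) if a < 0 <= b)


# One second of the note A at 440 Hz, and the A one octave higher at 880 Hz
low_a = sine_wave(440, 1.0)
high_a = sine_wave(880, 1.0)
```

Counting the cycles in each one-second list with `count_cycles` shows the higher note repeating roughly twice as often as the lower one, which is what the ear hears as a higher pitch.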

Audio synthesis is the process of combining sine waves in ways designed to either create new sounds or emulate existing sounds and musical instruments. Two basic forms of audio synthesis are the additive and subtractive methods. The additive method adds sine waves together to build up a timbre, while the subtractive method shapes timbre by filtering partials (the individual sine waves that make up a complex sound wave) out of an audio source.
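The two methods can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular synthesizer works: the names `additive` and `low_pass`, the 44,100 Hz sample rate, and the choice of a simple one-pole low-pass filter to stand in for the subtractive stage are all hypothetical.

```python
import math

SAMPLE_RATE = 44100  # samples per second (assumed)


def additive(partials, duration_s, sample_rate=SAMPLE_RATE):
    """Additive synthesis: sum sine waves.

    partials is a list of (frequency_hz, amplitude) pairs.
    """
    n_samples = int(duration_s * sample_rate)
    return [
        sum(amp * math.sin(2 * math.pi * freq * n / sample_rate)
            for freq, amp in partials)
        for n in range(n_samples)
    ]


def low_pass(samples, alpha):
    """One-pole low-pass filter; a smaller alpha removes more high frequencies."""
    out, prev = [], 0.0
    for x in samples:
        prev = prev + alpha * (x - prev)  # move part of the way toward the input
        out.append(prev)
    return out


# Additive: a 220 Hz fundamental plus seven progressively quieter harmonics
rich_tone = additive([(220 * k, 1.0 / k) for k in range(1, 9)], duration_s=0.1)

# Subtractive: start from the rich tone and attenuate its upper partials
mellow_tone = low_pass(rich_tone, alpha=0.05)
```

The additive step builds the timbre up from individual partials, while the subtractive step starts from a harmonically rich source and carves content away, which is why the filtered tone sounds duller than the original.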

Top 10 Digital Musical Instruments

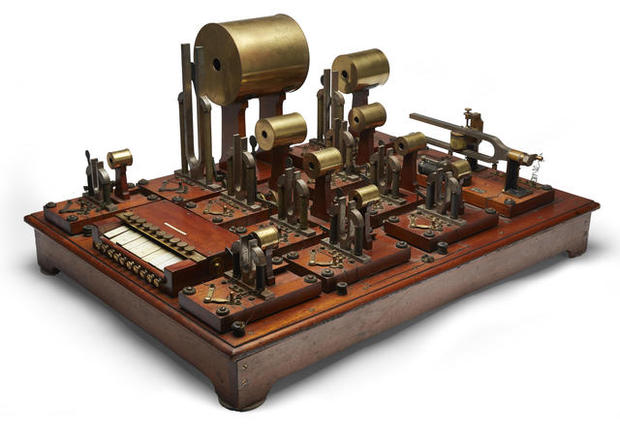

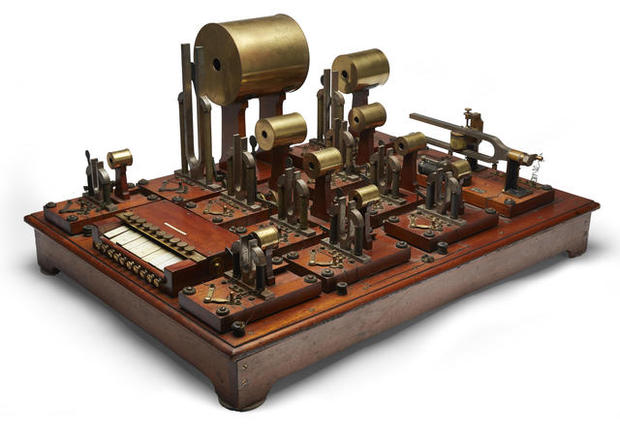

Over the course of the twentieth century, the synthesizer became a defining feature of modern music. A number of innovations in electronic music creation preceded its rise to the mainstream, however. Mechanical oscillators were developed in the late nineteenth century, and automated player pianos were in use by the 1900s. The early twentieth century also brought about the invention of the Theremin, a unique instrument that employs two antennae (one for amplitude and the other for pitch), which was created by accident when a Russian scientist was researching proximity sensors.

Other electronic music innovations from around that period included various early forms of the electronic organ (which employed both additive and subtractive synthesis), as well as the incorporation of developing technologies such as the vacuum tube, telegraphy and optoelectronics. By the mid-twentieth century, electronic keyboard instruments were beginning to come into popular use.

Shortly thereafter, an American electrical engineer, computer scientist and musician by the name of Max Mathews started developing computer music. Mathews was an employee at Bell Labs who wrote the first computer software programs that could perform programmed music. Educated at the California Institute of Technology and the Massachusetts Institute of Technology, he set the stage for the future of computer music as well as much of what is now commonly referred to as human-computer interaction.

Around the same time that Mathews developed the earliest computer music programs, American engineer and electronic music pioneer Robert Moog developed his Moog analogue synthesizer, which employed subtractive synthesis and voltage-controlled oscillators. The resultant sound was similar to that of the Theremin, which had become very popular in film scoring (particularly for science fiction films). And while Moog could not seem to endear his device to film composers as much as its predecessor had been, the Moog synthesizer would soon become, for a time, a staple of rock music in the 1970s.

This decade also saw the rise of dance music, which employed synthesized instrumentation to an unprecedented degree. Following that, the development of hip hop, techno, industrial and noise music throughout the 1980s explored many new implementations of electronics in music–such as sampled audio and programmed drums.

The Musical Instrument Digital Interface (MIDI) then introduced a new standard to music technology. It encodes fundamental musical characteristics (such as pitch and tempo) as digital data that any MIDI-capable device can read and play back with variable instrumentation. The standard gradually evolved to incorporate new extensions and functionality, and remains a stock feature on many digital music products to this day.
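As a rough illustration of how MIDI represents pitch as data, the Python sketch below converts a MIDI note number to a frequency and builds a three-byte note-on message. It assumes equal temperament with A4 (note 69) tuned to 440 Hz, which is a common convention rather than something stated above, and the function names are hypothetical.

```python
def midi_note_to_frequency(note, a4_hz=440.0):
    """Convert a MIDI note number (0-127) to a frequency in hertz.

    Assumes equal temperament with note 69 (A4) tuned to a4_hz.
    """
    return a4_hz * 2 ** ((note - 69) / 12)


def note_on(note, velocity=64, channel=0):
    """Build a MIDI note-on message: status byte, note number, velocity."""
    if not (0 <= note <= 127 and 0 <= velocity <= 127 and 0 <= channel <= 15):
        raise ValueError("note and velocity must be 0-127, channel 0-15")
    return bytes([0x90 | channel, note, velocity])


# Middle C (note 60) as a frequency and as a message a device could receive
middle_c_hz = midi_note_to_frequency(60)
middle_c_message = note_on(60, velocity=100)
```

The message says only which note to play and how hard; it carries no audio. That is why the same MIDI data can drive a piano patch on one device and a string patch on another.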

Now there are countless ways to generate music using electronic means. The digital revolution has produced a wide variety of new devices for digital recording. Transitioning into the 21st century, recording technology consolidated many elements that had previously been compartmentalized in older and more primitive recording environments. Mixers and multi-track recorders merged into standalone units. And as digital music has evolved, recording, mixing and mastering have become possible within a single digital interface, now commonly referred to as the Digital Audio Workstation (DAW).

The rise of internet culture has facilitated a number of ways to create and collaborate on music via the World Wide Web. HTML5 and browser APIs such as the Web Audio API have paved the way for in-browser audio interfaces, empowering users to create, edit and orchestrate sounds while also enabling easy collaboration between remote users.

And perhaps the defining quality of the digital music revolution is the ease and accessibility with which media can now be transmitted, shared, remixed, and redistributed. The FLVTO online MP3 converter, for example, outputs MP3 audio files from various streaming sources (such as YouTube), allowing users to store audio directly from their browser.

Some may say that the music industry is dying, but it is merely changing. While business structures and protocols are being reimagined for the sake of content monetization and digital rights management, the technology of creating and distributing music by digital means continues to evolve at a rapid pace. FLVTO is proof of the enduring innovation in truly user-oriented music technology. With one simple plugin, users can easily source content from their browser to store and enjoy in a compact and convenient format.