Long ago, the Internet was used only by a privileged minority and offered a limited range of information, so access to the global network mattered far less than it does today. Only employees of large institutions and laboratories had access to the Internet, which served as a channel for exchanging information among research institutions. There was no social media, no Instagram posts, and no live chat messages. Can you imagine that?

The great start of a new era

A brief history of search engines

The first way to make Internet resources easier to reach was the web directory, which organized links into thematic groups. Yahoo, which appeared in 1994, was one of the first such directories. Over time, as the number of sites in the directory grew severalfold, the Yahoo developers decided to build a dedicated search engine. However, this search engine was unlike the ones we use today: it searched only within the directory itself.

Structured web directories gained widespread acceptance, but the pace of the Internet's growth eventually led users to abandon them, because even the most advanced directories provide access to only a small fraction of the Internet.

Nowadays, the largest web directory is the Open Directory Project, or DMOZ, which lists about 5,000,000 resources. That is a relatively small number compared to the Google search engine, whose index contains about 8,000,000,000 documents.

The development of search engines. Several important events:

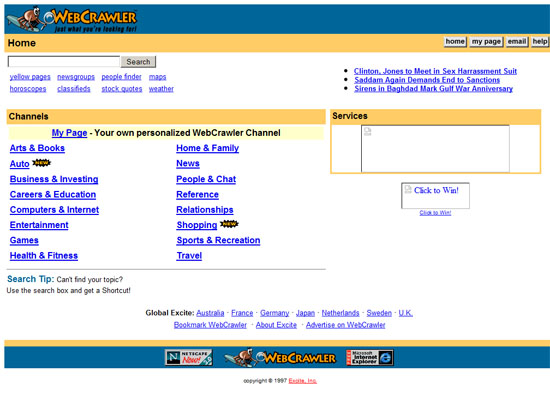

Popular Search Engines in the 90’s: Then and Now

- The first full-fledged search engine, WebCrawler, appeared in 1994.

- The Lycos and AltaVista projects were launched a year later, in 1995. AltaVista was the leader among search engines for many years.

- Stanford University students Larry Page and Sergey Brin, whom no one had heard of before, started work on a research project in 1997. As a result, the world became acquainted with a search engine called Google. Today Google holds the top position among hundreds of competitors.

- On September 23 of the same year, 1997, the creation of the Yandex search engine was officially announced. Yandex holds a leading position in the Russian segment of the Internet.

What are the types of search engines?


There are four types of search engines: crawler-based systems, human-controlled systems (directories), hybrid systems, and metasearch systems.

- Systems with web crawlers

They consist of three parts: a crawler (also called a ‘bot’, ‘robot’ or ‘spider’), an index, and search engine software. The crawler traverses the network and builds a list of web pages. The index is a large archive holding copies of those pages. The software's job is to evaluate and rank the results. Because the crawler in this design is constantly exploring the network, the information stays more up to date. Most contemporary search engines are systems of this type.
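The three parts described above can be sketched in a few lines of Python. This is only a toy illustration, not how any real search engine is built: the `TOY_WEB` dictionary, the `.example` addresses, and the word-count scoring are all assumptions made up for the example, and the "crawler" walks an in-memory structure instead of the real network.

```python
from collections import defaultdict, deque

# Hypothetical in-memory "web": address -> (page text, outgoing links).
TOY_WEB = {
    "a.example": ("history of search engines", ["b.example", "c.example"]),
    "b.example": ("web directories and search", ["a.example"]),
    "c.example": ("crawlers build the index", []),
}


def crawl(start_url, web):
    """Crawler: follow links breadth-first and collect the pages found."""
    seen = {start_url}
    queue = deque([start_url])
    pages = {}
    while queue:
        url = queue.popleft()
        text, links = web[url]
        pages[url] = text
        for link in links:
            if link in web and link not in seen:
                seen.add(link)
                queue.append(link)
    return pages


def build_index(pages):
    """Index: map each word to the set of addresses whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in text.lower().split():
            index[word].add(url)
    return index


def search(index, query):
    """Search software: rank addresses by how many query words they contain."""
    scores = defaultdict(int)
    for word in query.lower().split():
        for url in index.get(word, ()):
            scores[url] += 1
    # Highest score first; ties are broken alphabetically.
    return sorted(scores, key=lambda url: (-scores[url], url))


pages = crawl("a.example", TOY_WEB)
index = build_index(pages)
results = search(index, "search engines")
print(results)
```

Here the page that contains both query words is listed before the page that contains only one. Real ranking software weighs many more factors than a simple word count.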

- Systems controlled by humans (Internet directories)

These search engines receive lists of web pages. The catalog contains the address, title, and a brief description of each website. An Internet directory searches only among the pages whose descriptions have been submitted by webmasters. The advantage of a directory is that all resources are checked manually, so the quality of the content tends to be better than in other results. There is a drawback, though: the directory data is updated manually and can lag significantly behind the real situation, so page rankings cannot change instantly. Examples of such systems are Yahoo, DMOZ, and Galaxy.

- Hybrid systems

Search engines such as Yahoo, Google, and MSN combine the functions of crawler-based systems and human-operated systems. They have their own crawlers, which visit pages and assess the quality of content, inbound and outbound links, and so on. The “hybrid part” is the people who occasionally review some websites themselves or respond to reports of link manipulation and other “bad” practices. Incidentally, manual link building and the spread of SEO specialists were the reason Google and Yahoo added human control to their search engines. That control made link building much more difficult, though some still claim that link building is not dead.

- Meta systems

Metasearch systems combine and rank the results of several search engines. They were useful when each search engine had a unique index and ranking mechanisms were less ‘intelligent’. Now that search has improved considerably, the demand for them has decreased. MetaCrawler and MSN are examples of meta systems.
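As a rough illustration of combining and ranking results from several engines, here is a minimal Python sketch. The merging rule (each engine contributes 1/position to a page's score) is an assumption chosen for simplicity, not the method of MetaCrawler or any other real metasearch system, and the engine result lists are made-up data.

```python
from collections import defaultdict


def merge_results(result_lists):
    """Combine ranked lists from several engines into a single ranking.

    Assumed scoring rule: a page at position p in an engine's list
    receives 1/p points from that engine; points are summed across engines.
    """
    scores = defaultdict(float)
    for results in result_lists:
        for position, url in enumerate(results, start=1):
            scores[url] += 1.0 / position
    # Highest combined score first; ties are broken alphabetically.
    return sorted(scores, key=lambda url: (-scores[url], url))


# Hypothetical ranked results returned by three different engines.
engine_a = ["x.example", "y.example", "z.example"]
engine_b = ["y.example", "x.example"]
engine_c = ["y.example", "w.example"]

merged = merge_results([engine_a, engine_b, engine_c])
print(merged)
```

A page that several engines place near the top ends up first in the merged list, which is the benefit a metasearch system offered when individual indexes differed a lot.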


The stages in the development of search engines form the following logical chain:

- Creation of a basic ranking algorithm.

- Optimizers identify weaknesses in it and try to exploit them.

- Search engines substantially adjust the ranking algorithm by changing the degree of influence of various factors.

- Optimizers analyze these changes, adapt to the new conditions, and start manipulating the search results on a massive scale.

And so on: the process is cyclical.